Skills, MCP, and the Orchestration Gap Nobody's Fixing

Agent skills became an open standard. MCP connects everything. But the layer between them, the one that keeps agents from failing catastrophically in production, barely exists.

Six months ago, teaching an AI agent a new capability meant writing a system prompt and praying. Today, I drop a SKILL.md file into a folder and it works across Claude Code, Codex, Gemini CLI, Cursor, and 20+ other agents unchanged.

That shift happened fast. What hasn't kept pace is everything underneath.

The skills standard nobody expected

In October 2025, Anthropic introduced Skills for Claude. By December, they open-sourced the standard at agentskills.io. Within weeks, OpenAI and Microsoft adopted it. Google's Gemini CLI followed in January 2026.

The adoption timeline tells you something important: everyone was waiting for someone to go first.

| Date | Milestone |
|---|---|
| Oct 2025 | Anthropic introduces Skills for Claude |
| Dec 2025 | Open standard published at agentskills.io |
| Late Dec 2025 | OpenAI and Microsoft adopt SKILL.md |
| Jan 2026 | Google Gemini CLI support, skills.sh launches |

A skill is a folder. At minimum, it contains a SKILL.md with YAML frontmatter (name and description) and markdown instructions. Optionally, it includes scripts, reference docs, and assets. The agent reads the frontmatter to decide if the skill is relevant, then lazy-loads the full instructions only when needed.

    skill-name/
    ├── SKILL.md      # Required: frontmatter + instructions
    ├── scripts/      # Optional: executable code
    ├── references/   # Optional: docs loaded on demand
    └── assets/       # Optional: templates, icons, fonts
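
To make the lazy-loading idea concrete, here is a minimal Python sketch of how a host could discover skills by reading only their frontmatter, then load the full instructions once a skill is selected. It is not how any particular agent implements this. The names `SkillSummary`, `split_frontmatter`, `discover_skills`, and `load_instructions` are hypothetical, and the parser assumes flat `key: value` frontmatter where a real loader would use a YAML library.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass
class SkillSummary:
    name: str
    description: str
    path: Path


def split_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split a SKILL.md into (frontmatter fields, markdown body).

    Assumption: frontmatter is flat `key: value` pairs between `---` lines.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    fields: dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            return fields, "\n".join(lines[i + 1:])
        key, sep, value = line.partition(":")
        if sep:
            fields[key.strip()] = value.strip()
    return {}, text  # no closing marker: treat the whole file as body


def discover_skills(root: Path) -> list[SkillSummary]:
    """Collect name and description only: cheap enough to keep in context."""
    summaries = []
    for skill_file in sorted(root.glob("*/SKILL.md")):
        fields, _ = split_frontmatter(skill_file.read_text(encoding="utf-8"))
        if "name" in fields and "description" in fields:
            summaries.append(
                SkillSummary(fields["name"], fields["description"], skill_file)
            )
    return summaries


def load_instructions(skill: SkillSummary) -> str:
    """Read the full markdown body only after the skill has been chosen."""
    _, body = split_frontmatter(skill.path.read_text(encoding="utf-8"))
    return body.strip()
```

The split matters for context cost: `discover_skills` can run over every installed skill up front, while `load_instructions` is only paid for on the skills a task actually needs.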

This is intentionally simple. cube2222 on Hacker News put it well: skills are "a much bigger deal long-term than MCP" because they're easy to author (just markdown at the basic level), context-efficient (lazy loading), and they compose naturally. An agent can load multiple skills for a single task without any of them knowing about each other.

The deeper insight is what NitpickLawyer pointed out in the same thread: "You can use the agents themselves to edit, improve, and add to the skills." Skills that teach agents how to create better skills. I've been doing exactly this in production for months, and the compounding effect is real. Context management is half the battle here, which is why understanding how agents manage their context window matters so much when designing skills.

Where skills end and MCP begins

There's persistent confusion about whether skills replace MCP. They don't. They solve fundamentally different problems.

Skills are knowledge. They tell an agent how to approach a task: what patterns to follow, what mistakes to avoid, what tools to use and in what order. They're ephemeral context that the agent pulls in as needed.

MCP is connectivity. It gives an agent access to external systems: databases, APIs, SaaS tools. It's a structured protocol (JSON-RPC 2.0) for deterministic tool execution.
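
For a sense of what that protocol looks like on the wire, here is a small Python sketch that builds a JSON-RPC 2.0 request for MCP's `tools/call` method and unwraps a response. The helper names and the `web_search` tool in the usage note are hypothetical, and transport (stdio or HTTP) is left out entirely.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so replies can be matched


def tool_call_request(tool_name: str, arguments: dict) -> str:
    """Build a JSON-RPC 2.0 request for MCP's `tools/call` method."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


def parse_response(raw: str) -> dict:
    """Return the `result` of a JSON-RPC response, or raise on a protocol error."""
    message = json.loads(raw)
    if message.get("jsonrpc") != "2.0":
        raise ValueError("not a JSON-RPC 2.0 message")
    if "error" in message:
        err = message["error"]
        raise RuntimeError(f"JSON-RPC error {err['code']}: {err['message']}")
    return message["result"]
```

Calling `tool_call_request("web_search", {"query": "agent skills"})` yields a plain JSON string. Nothing in it tells the agent when that tool is the right one to call, which is the gap skills fill.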

Elliott Girard from Towards AI framed it cleanly: "Most teams pick the wrong one." The decision tree is straightforward:

- Need to codify procedural knowledge? Write a skill.

- Need to connect to an external service? Build an MCP server.

- Have a complex MCP server that's hard to use correctly? Use both. Write a skill that teaches the agent how to use your MCP server effectively.

That third case is where it gets interesting. I run MCP servers for web search, code context retrieval, and deep research. Without skills describing when to use each tool and how to interpret results, the agent burns tokens calling the wrong tool or misreading outputs. The skill is the experience layer that makes raw tool access productive.

Simon Willison initially suggested skills might replace MCP entirely, citing MCP's token consumption issues. But MCP's progressive discovery update in January 2026 solved that problem. Both standards now handle context efficiently. They're complementary, not competing. I covered the production side of MCP in Building Production-Ready MCP Servers, and the skills layer is the missing piece that makes those servers actually usable by agents.

The capability illusion

Here's what the "skills + MCP" narrative misses entirely: neither one solves the orchestration problem.

Consider a customer refund agent. It needs to read a support ticket, verify a purchase, check refund eligibility, process the refund through Stripe, and send a confirmation email. Skills can teach it the refund policy. MCP can connect it to Stripe and the email service.

What happens when the Stripe API times out mid-refund? What happens when the agent sends the confirmation email before the refund actually processes? What happens when it misreads the ticket and processes a refund outside policy?

These aren't hypothetical failures. They're the default failure mode for every agent in production today. Hady Walied nailed it: "Today's frontier models can already solve most agent tasks in isolation. The failure mode isn't 'the model can't figure out what to do.' It's that agents fail in ways that are catastrophically expensive and impossible to debug."

The requirements that demos skip:

- Audit trails. Why did the agent make each decision?

- Error handling. Retry logic, timeouts, fallback paths.

- State management. What happens mid-transaction when something fails?

- Compliance. Can you prove the agent followed policy?

- Cost control. How do you prevent runaway token usage?

Most agent frameworks punt on all of these. You get a nice API for chaining LLM calls and calling tools. You don't get supervision, fault isolation, or transactional guarantees.
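
As a rough illustration of what "not punting" looks like, here is a minimal Python sketch covering three of those requirements for a single step: a per-attempt timeout, bounded retries with jittered backoff, and an audit entry for every attempt, plus a token budget check. `RunContext`, `run_step`, and `BudgetExceeded` are hypothetical names, not part of any framework, and the defaults are placeholders.

```python
import asyncio
import random
from dataclasses import dataclass, field


class BudgetExceeded(RuntimeError):
    """Raised when a run would spend more tokens than it was allotted."""


@dataclass
class RunContext:
    token_budget: int
    tokens_used: int = 0
    audit_log: list = field(default_factory=list)

    def charge(self, tokens: int) -> None:
        # Cost control: refuse the spend before it happens, not after.
        if self.tokens_used + tokens > self.token_budget:
            raise BudgetExceeded(f"{self.tokens_used + tokens} > {self.token_budget}")
        self.tokens_used += tokens

    def record(self, step: str, outcome: str, **detail) -> None:
        # Audit trail: one entry per attempt, including the failures.
        self.audit_log.append({"step": step, "outcome": outcome, **detail})


async def run_step(ctx, name, action, *, timeout_s=5.0, max_attempts=3, base_delay_s=0.5):
    """Run one step with a per-attempt timeout and jittered exponential backoff."""
    for attempt in range(1, max_attempts + 1):
        try:
            result = await asyncio.wait_for(action(), timeout=timeout_s)
        except (asyncio.TimeoutError, ConnectionError) as exc:
            ctx.record(name, "error", attempt=attempt, error=type(exc).__name__)
            if attempt == max_attempts:
                raise  # out of attempts: let the caller choose a fallback path
            delay = base_delay_s * 2 ** (attempt - 1)
            await asyncio.sleep(delay * random.uniform(0.5, 1.0))
        else:
            ctx.record(name, "ok", attempt=attempt)
            return result
```

One caveat the sketch leaves out: retrying a call that moves money is only safe if the call is idempotent, for example by sending an idempotency key with each refund request. That is a state management problem, and it has to be handled around the model, not inside the prompt.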

Jido and the OTP argument

The most interesting answer I've seen to the orchestration problem comes from an unexpected direction: Elixir.

Jido is an agent framework built on BEAM/OTP, the runtime behind WhatsApp, Discord's backend, and a good deal of telecom switching infrastructure. The core pitch is that the problems agents face in production (concurrency, fault tolerance, state management, observability) are the ones Ericsson built Erlang and OTP to solve for telephone switches in the late 1980s and 1990s.

A Jido agent is an OTP process. If it crashes, its supervisor restarts it. If a subtask fails, the failure is isolated. State is persistent. OpenTelemetry is baked in from day one.

    # Illustrative sketch: option names and their placement follow the
    # original example; check them against the Jido docs for your version.
    defmodule MyApp.RefundAgent do
      use Jido.Agent,
        name: "refund_agent",
        description: "Processes customer refunds with audit trail"

      use Jido.AI,
        strategy: Jido.AI.Strategies.ReAct,
        model: "anthropic:claude-sonnet-4-5",
        # Agent state: current status plus an append-only audit log
        schema: [
          status: [type: :string, default: "pending"],
          audit_log: [type: {:list, :map}, default: []]
        ],
        # Each signal is routed to the action that handles that step
        signal_routes: [
          {"refund.start", MyApp.Actions.VerifyPurchase},
          {"refund.verified", MyApp.Actions.CheckEligibility},
          {"refund.eligible", MyApp.Actions.ProcessStripeRefund},
          {"refund.completed", MyApp.Actions.SendConfirmation}
        ]
    end

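The signal routing is the key pattern. Each step is a separate action with its own error handling, and the coordinator doesn't move to the next step until the current one succeeds. If Stripe times out, the failure stays inside that action: it can be retried under whatever retry and backoff policy the action is configured with, and a crash is handled by the supervisor's restart rules. (OTP supervisors restart processes within a restart-intensity limit; backoff is not built in and has to come from the action or the framework.) The confirmation email cannot send before the refund completes, because the action that sends it is only routed from the refund.completed signal.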

Compare this to the typical Python agent loop:

    result = await agent.run("Process refund for ticket #1234")
    # Hope it works. Check logs if it doesn't.

I'm not saying everyone should rewrite their agents in Elixir. But the production patterns Jido demonstrates (supervision trees, signal-based coordination, isolated fault domains, mandatory audit trails) are what every serious agent framework needs, regardless of language.
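
The ordering guarantee in particular doesn't need Elixir. Here is a minimal Python sketch of signal-based coordination that reuses the signal names from the refund example. It is not Jido's implementation and has no supervision or process isolation. The handlers, the 30-day eligibility window, the `refund.rejected` and `refund.confirmed` signals, and the `gateway` callable are all assumptions made for illustration.

```python
from typing import Callable

# A handler receives the shared state and returns the next signal to emit.
Handler = Callable[[dict], str]


def run_workflow(routes: dict, start: str, state: dict, max_steps: int = 20) -> dict:
    """Dispatch signals to handlers one at a time, logging every transition."""
    state.setdefault("audit_log", [])
    signal = start
    for _ in range(max_steps):
        handler = routes.get(signal)
        if handler is None:
            state["status"] = signal  # no route: the workflow has finished
            return state
        try:
            next_signal = handler(state)
        except Exception as exc:
            # A failed step stops the workflow; later handlers never run.
            state["audit_log"].append(
                {"signal": signal, "outcome": "failed", "error": repr(exc)}
            )
            state["status"] = "failed"
            return state
        state["audit_log"].append(
            {"signal": signal, "outcome": "ok", "emitted": next_signal}
        )
        signal = next_signal
    raise RuntimeError("workflow exceeded max_steps")


def verify_purchase(state: dict) -> str:
    state["purchase_verified"] = True
    return "refund.verified"


def check_eligibility(state: dict) -> str:
    # Hypothetical policy: refunds allowed within 30 days of purchase.
    return "refund.eligible" if state["days_since_purchase"] <= 30 else "refund.rejected"


def process_refund(state: dict) -> str:
    state["refund_id"] = state["gateway"](state["amount"])  # may raise
    return "refund.completed"


def send_confirmation(state: dict) -> str:
    state["email_sent"] = True
    return "refund.confirmed"


ROUTES = {
    "refund.start": verify_purchase,
    "refund.verified": check_eligibility,
    "refund.eligible": process_refund,
    "refund.completed": send_confirmation,
}
```

Because `send_confirmation` is only reachable through `refund.completed`, a gateway timeout in `process_refund` ends the run with `status` set to `"failed"` and no email sent. The order is enforced by the routing table, not by hoping the model calls tools in the right sequence.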

What this means for your stack

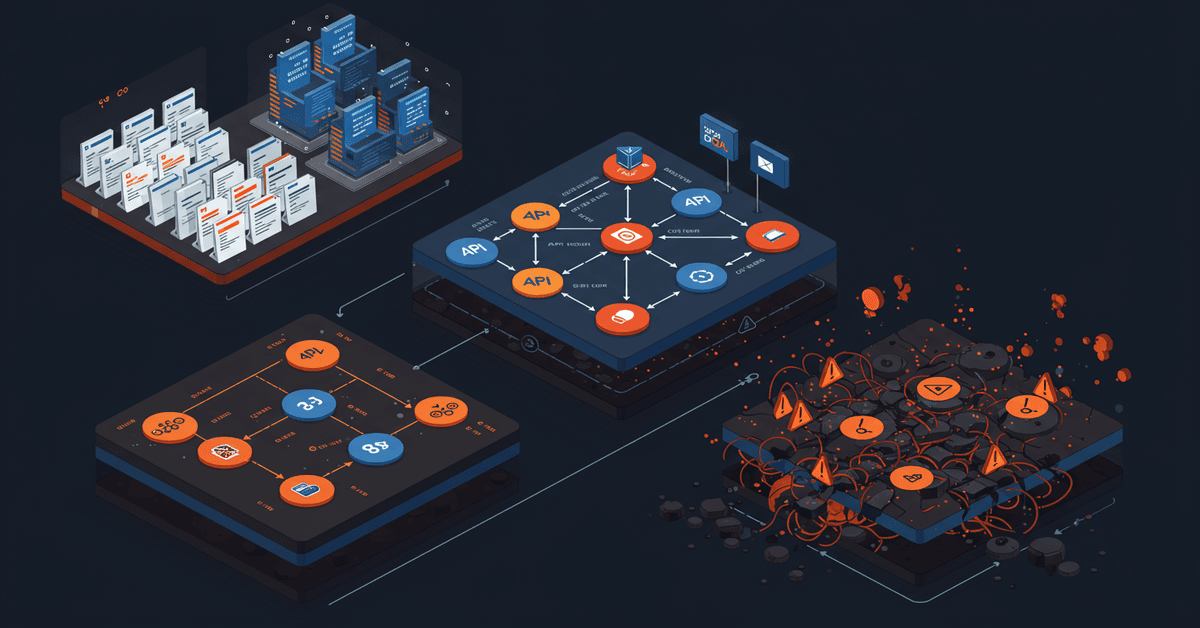

The agent capabilities stack has three layers, and most teams are only building the first two:

Layer 1: Capability (skills). How the agent knows what to do. This is mostly solved. Write a SKILL.md, drop it in a folder, and it works across agents. The open standard means your investment is portable.

Layer 2: Connectivity (MCP). How the agent talks to external systems. Also mostly solved. Build an MCP server once, connect it from any client. The N+M integration model replaced N*M custom integrations.

Layer 3: Orchestration. How the agent runs reliably in production. This is the gap. Most teams cobble together retry logic, logging, and state management ad hoc. The result is agents that work in demos and fail under real load.

If you're building agents for production today, my recommendation is blunt:

- Use skills aggressively. Every team workflow, coding standard, and domain process should be a SKILL.md. They're cheap to write, easy to test, and portable across agents. Start with your most repetitive tasks.

- Use MCP for external integrations. Don't reinvent connectivity. If an MCP server exists for your service, use it. If it doesn't, build one and open-source it.

- Treat orchestration as infrastructure, not application code. Don't scatter retry logic and error handling across your agent prompts. Use a framework that handles supervision, state, and observability as first-class concerns. If you're in the Elixir ecosystem, Jido is worth evaluating. If you're not, look for frameworks that adopt the same patterns: process isolation, supervised execution, signal-based coordination.

- Audit everything. The moment you deploy an agent that touches money, customer data, or external APIs, you need a complete decision trail. This isn't optional. It's the difference between "the agent did something weird" and "here's exactly what it decided and why."

The skills standard solved the capability packaging problem. MCP solved the connectivity problem. The orchestration layer is where the real engineering challenge lives, and it's where the next wave of production agent failures will happen if we keep ignoring it.

Build the third layer before you need it. You won't have time once the agent is processing refunds at 3 AM and the Stripe webhook starts failing.

